
Different parts of the tongue are responsible for different flavours. Touching the tip of your tongue to a lollipop will create the sweetest sensation and rolling a sour Skittle into your cheek will make the sour pop.

That was what I learned in 2002. The teacher handed six-year-old me an assortment of food — sweet, sour, bitter, and salty — to test out on different areas of the tongue. Then, she handed me a tongue map and told me that different regions responded differently to different flavour groups. Did I taste a difference? Who knows? My teacher told me it was true, so I nodded yes, and went along, eager to get the answers right.

The tongue can be mapped into zones.

The tip of the tongue responds to sweet flavours. The sides right behind it respond to salty flavours. Behind that is sour, then at the back, bitter.

Or maybe sour comes second and salty third? Or maybe salty is at the very tip?

A quick Google search of ‘tongue map’ will clear it up, right?

Wrong.

If you followed the link, you can see multiple versions of the tongue map. The information isn’t consistent because the tongue map, as we know it, is based on a lie.

The tongue map is based on the work of German scientist David P. Hänig. In a 1901 paper, he found tiny differences between the threshold for certain tastes on different areas of the tongue.

There are three key things to keep in mind.

- The differences were minute, certainly not enough for a grade-two student to pick up from sampling a lollipop and a lemon wedge.

- The differences were in the amount of flavour required for the taste buds to recognize its presence — not the intensity of the flavour once the tongue tasted it.

- Hänig published the paper in 1901. Since then, scientists have proven that receptors responsible for different flavours are scattered throughout the tongue.

Hänig didn’t consider these things, so he drew a map dividing the tongue into flavour zones. Over time, people took this map out of context, stripped it of nuance, and perpetuated the oversimplified idea that different parts of the tongue are responsible for different tastes. That was the origin of the modern tongue map.

And so, because of a German scientist at the turn of the century, I held sweet things on the tip of my tongue and told myself they were sweeter. Crazy.

I can blame Hänig and my elementary teacher (who was wonderful, by the way) for my believing the tongue map was real in the first place, but not for my clinging to that belief. I required multiple credible sources to finally accept that it was false. The science lesson that the taste test was part of is a distant memory, but the tongue map was part of my arsenal of facts, something that had a familiar ring to it. For that reason, I had to check, and double-check, before dismissing it as false.

We live in a world where evidence is widely accessible. A Google search can reveal a scientific answer, broken down to the basics for easy consumption. Books and papers and studies from around the globe are available, yet the tongue map made it into my classroom.

The tongue map is not the only commonplace lie. Here are some other greatest hits:

- Don’t touch a baby bird if it falls out of the nest. If you do, mama bird will smell you and won’t care for the baby. (False: birds don’t use smell to identify their young.)

- Alcohol kills brain cells. (Uh, nope. No brain cells were killed last Friday. Large amounts of alcohol merely prevent new cells from growing. You can’t kill what isn’t alive yet.)

Cool facts, right?

Here are some other ‘cool’ facts.

- Climate change is real and caused by human-made factors. (Unless you’re talking to one of the 11 percent of Canadians surveyed in 2018 who claimed there is no connection.)

- Black people and white people feel pain the same way and should receive the same treatment. (Unless you’re consulting literature, present in medical schools to this day, that contains information to the contrary. This false information has no place in 2020.)

See, some people believe you shouldn’t touch a baby bird, and some people believe Black people should be given less pain medication.

Both groups are misinformed — one is dangerous.

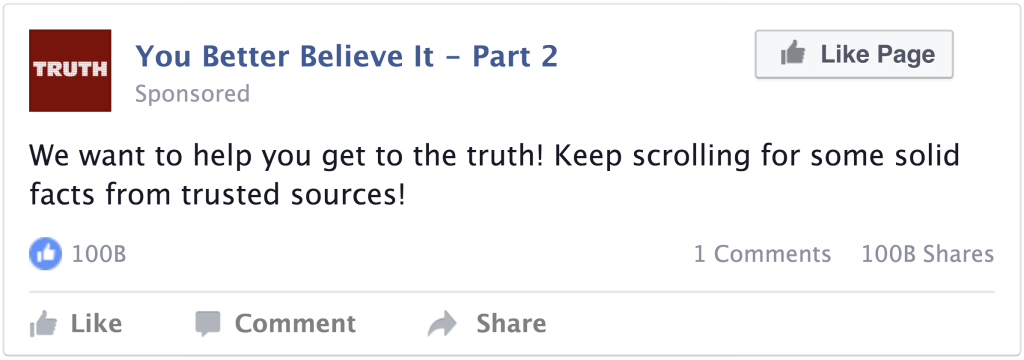

Now we know that the truth, like a clickbait article, isn’t always as advertised. Let’s look at a more trusted source of information to find out why.

A lie will go round the world while truth is pulling its boots on.

Charles Haddon Spurgeon

A lot can be explained by the illusory truth effect.

The more something is repeated, the more likely we are to think it’s true. If something is repeated often, we are more likely to believe it. If we hear something multiple times, we are less likely to question its validity.

It’s not quite as simple as repeating a statement three times, but, in tandem with other factors, repetition has a huge impact on the way we process information. We are more inclined to believe a repeated lie than a one-off truth. (Unkelbach and Rom)

There’s no logical reason to believe repeated information over new information. As one researcher says, “It’s like buying several copies of the morning paper to ensure that the content is true.” (Unkelbach and Rom) However, there are some theories about why we do it.

One reason is processing fluency. In simple terms, the more we hear something, the faster the brain can process it. (Unkelbach and Rom) This makes the information feel clearer, and that ease of processing is easily mistaken for truth. This theory also explains why we are more likely to believe things that rhyme. Our brains pick up patterns and process them faster. (Unkelbach and Rom)

Another reason is relational theory, the idea that memories provide meaning and context to a piece of information. The more memories and associations people have with a piece of information, the less likely they are to question its validity. (Unkelbach and Rom) Therefore, repetition creates trust in a statement.

Patterns, and our willingness to see them, are key ingredients in the adoption of a lie. Recognizing patterns is a survival technique we developed to identify predators and react quickly to our environment. We can refer to this occurrence as patternicity. Outside of a survival situation, patternicity can account for our willingness to believe in everything from alien abductions to the luckiness of a particular number.

Patternicity helps us avoid surprises. For our ancestors, it was better to be overcautious, to create something out of insignificance, than to dismiss something significant and possibly face danger. Turning the rustling of grass into an attacking predator, or a collection of coincidences into proof of alien abduction, is a way of organizing information.

It is super helpful right before a cougar attack, and super unhelpful when surfing the internet.

We are primed to make connections and draw patterns to validate what we see or hear. Once those patterns are formed, processing contradictory information becomes harder. We WANT to believe.

Patternicity can be explained by the right side of the brain and dopamine. Patterns are processed in the cerebral cortex, which is also responsible for speech and higher-level thinking. They are mainly processed on the right side, the part of the brain that performs creative tasks. Thus, pattern-making is less of a rational process and more of an emotional response to stimuli. Pattern-finding is also heightened by increased dopamine. Give a person cocaine (or another stimulant) and watch them connect dots much more quickly.

Pattern-seeking behaviour can be incredibly useful. It’s how we string together information to create new ideas. It can also lead us to believe lies.

To learn more about patternicity, the brain, dopamine, and our caveman tendencies, watch “The Pattern behind Self-Deception” by Michael Shermer.

In my specific case, this ability to organize information and sensation into patterns (real or fake) explains my belief that the tongue map actually worked. I wanted to notice changes in flavour around my mouth. My brain wanted to create patterns and order based on what my teacher told me.

I lie to myself all the time. But I never believe me.

S.E. Hinton, The Outsiders

We all want to be right. I want what I’m telling you right now to be right, I want my belief that Earth is round to be right, and I wanted the tongue map — information I had learned, believed and passed on — to be right. I was invested in the lie. It was a part (a teensy, tiny part) of my identity.

The attachment humans form to lies is responsible for two main flaws in logical reasoning: confirmation bias and the backfire effect.

People want to believe things that fit into their worldview, and when confronted with conflicting information, they will resist. Presenting them with proof they are wrong may lead them to cling to their lie even tighter.

What about completely new subjects? This would exclude confirmation bias, but another effect takes its place: the anchoring effect, our tendency to lean heavily on the first piece of information we receive about a topic.

We have a lot of confidence in our ability to find the truth. However, humans underestimate their ability to tell lies and overestimate their ability to detect them. We are all walking around expecting things to be truer than they are.

In a 2003 study, 60 police interrogators rated their ability to spot a lie as high and their ability to tell a lie as low. In reality, they detected lies at a rate below chance, meaning they would have done better by guessing at random. They were also far better at telling lies than they originally thought. (Elaad)

We are far better at spreading disinformation than we are at spotting it.

“Men occasionally stumble over the truth, but most of them pick themselves up and hurry off as if nothing happened.”

Winston Churchill

What else goes into a lie? We’ve explored their creation — what about their distribution? Why are some lies noticed and questioned, while others remain unchecked? The answer might relate to who told the lie.

Some people in society are considered trustworthy by default; for example, doctors, security guards, and parents. These people have to go the extra mile to lose our trust. They’re the people we find when we’re lost or in crisis and trust to guide us towards good decisions.

They’re also the people whose thoughts and direction we hold most dear. In the Milgram experiment, performed in 1961, everyday people were told to run increasing volts of electricity through other people by turning a knob. They did it for the sole reason that someone in a white coat told them to. Nobody was ever shocked, and all pain reactions were acted, but the people following orders didn’t know that. (Rom)

If all it took was a lab coat to get subjects to harm another person, it stands to reason that it takes a lot less authority to spread a low-stakes claim like the tongue map.

Society often rewards behaviour that aligns with the rest of the group. That conformity can be useful when making collective decisions, but it can also discourage individual critical thinking.

Everybody in my class believed there was a tongue map, so it was easier for me to believe it too.

Scroll down for this chapter’s lecture on applying what you learned to your own life.

Lecture: We are what we believe.

Kathleen Phillips

We want to believe what society and instinct tell us.

We NEED to believe rational, fact-based information.

A 2009 study from the University of California found the average American consumes 34 gigabytes of data a day. We spend eight hours of that day sleeping… at least, ideally. That data translates into roughly 100,000 words of information in 16 waking hours, or 6,250 words per hour of raw information. For context, if you made it to this lecture, you’ve only read about 2,000 words.
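In the spirit of checking claims rather than trusting them, here is a minimal sketch of that arithmetic. The variable and function names are my own, and the numbers are only the ones quoted above (100,000 words a day, eight hours of sleep, about 2,000 words read so far), not fresh data.

```python
# Figures quoted in the paragraph above; nothing here is new data.
WORDS_PER_DAY = 100_000
HOURS_IN_DAY = 24
HOURS_ASLEEP = 8            # the "ideal" eight hours of sleep
WORDS_READ_SO_FAR = 2_000   # the article's own rough estimate


def words_per_waking_hour(words_per_day=WORDS_PER_DAY, hours_asleep=HOURS_ASLEEP):
    """Spread a day's worth of words evenly across the waking hours."""
    waking_hours = HOURS_IN_DAY - hours_asleep
    return words_per_day / waking_hours


rate = words_per_waking_hour()

# How many minutes of a typical day's intake the article so far represents.
minutes_equivalent = WORDS_READ_SO_FAR / rate * 60

print(f"{rate:.0f} words per waking hour")
print(f"{minutes_equivalent:.1f} minutes of a typical day's intake")
```

At that rate, the roughly 2,000 words you have read so far add up to about 19 minutes of an average day's intake.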

That doesn’t mean we process or store all that data, but we do decide what to process and how to process it. We make thousands of unconscious snap decisions about what to hold on to, what to dismiss, what is true, and what is false.

All of these snap decisions shape how we view the world and the people in it. The information we choose to accept as truth shapes who we become as human beings, so what happens when some of that truth is actually a lie?

There are the harmless socially acceptable lies, the tongue map for example, and then there are radical or harmful lies, like racial superiority. With so much information bombarding us, we are unable to rationally piece everything together, so we rely on crutches like patternicity and trust in authority to bridge the gaps.

These crutches support vastly different world views. We’re all working with the same materials but painting different portraits.

All these different world views, and all the lies and truths they are made of, stem from the same human shortcomings. The failure of rational thought that made me argue the tongue map was real can help explain the behaviour of cult members and terrorists. It’s all in degrees.

Someone with authority repeated information that reinforced our world view. We didn’t want that to crumble, so we clung to the information’s rightness in the face of confrontational facts. We found patterns, we survived.

I used the example of the tongue map as my lie because it is an easy one to debunk. It’s a straightforward lie with a clear-cut history. It also has low stakes. Letting it go was not a hardship.

It did make me question what other lies I adopted as truth. Perhaps others were less harmless.

In this lecture, I can’t process all of my possible failures in rational thinking. You don’t need, or want, to hear about them. I do recommend going through the process yourself. Question your own beliefs. I dare you. What lies masquerade in your world view?

I should take a moment as I barrel toward the end to clarify one thing: I don’t think that belief without rational evidence, otherwise known as faith, is necessarily wrong. I am arguing it can be — particularly when the believer is not aware they are taking a leap of faith.

So, what can we do?

I can’t give you a definitive guide. And I don’t want to encourage you to become so overly introspective that you drive yourself, and probably everyone around you, insane. I am suggesting that you do a few things, whenever possible.

One: Question authority. I’m not saying shout f—- the police and join an anarchist group (unless that’s where the truth leads you, in which case rock on), but maybe think twice before blindly turning the knob because someone in a white coat told you to.

Take a second look. Challenge something you know or let someone else challenge it for you.

Two: Search out new information. If it’s from a seemingly credible source and it makes you uncomfortable, it might be worth a second look. Look into how the author supports their claims, and think critically about the information and where it came from.

Three: Get the details. If you can’t find specifics, it’s probably not credible. Remember that we have the ability to find patterns in information where there aren’t any. The scope of known information also changes daily. Keep it hip.

Finally, keep in mind that the truth might not be all you hoped for. In the words of Gloria Steinem, “The truth will set you free, but first, it will piss you off.”

It’s a crazy world out there, full of information in all different forms. We’re all just sifting through that information, doing our best to craft it into a world view. So, the next time someone mentions a tongue map, be kind when you tell them the truth. They have a good reason for telling a lie.