In my Grade 12 Model UN course, my teacher presented three AI-generated images to the class: China’s newly discovered time machine, Switzerland’s impenetrable defence bubble, and dinosaurs addressing the United Nations council.

My classmates and I laughed at the mixture of realism and absurdity in the bizarre AI-generated images, and a class of teenagers was engaged in a lesson with important learning outcomes.

Manitoba teachers are experimenting with AI tools to generate lesson plans, create visuals, or complete organizational tasks. Some teachers see AI as a resource to reduce workload, while others worry about ethical and privacy concerns.

If used carefully, AI can give teachers back something they rarely have enough of: time. Time to teach with intention, to spend with family and friends, or to better connect with their students. But when teachers over-rely on AI and use it to replace their own pedagogical knowledge, they risk crossing foggy ethical boundaries.

As the government and some school divisions still lack AI guidelines or policies, practices for using AI effectively and ethically vary from classroom to classroom, and the burden of navigating these murky waters falls on individual teachers. The question of if and how to use AI has never been more urgent, or less clear.

How Teachers are Navigating AI

AI has already become a part of how teachers plan and prepare for their classes.

Eighty-seven per cent of educators have used AI, though fewer than half of them feel confident using it, according to a report by Actua, a Canadian non-profit that promotes STEM. The same report found that 65 per cent of teachers believe a lack of access to sufficient instructional resources or tools is a barrier to being better prepared to use AI effectively. While many teachers use AI, many are still learning how to integrate it into their work.

Actua’s report also shows that teachers are using AI for translations, report card comments, grading, scheduling, and administrative tasks. Teachers also report using AI to draft learning plans for students to help adapt lessons to their different skill levels and needs.

While there are education-specific AI tools like Goblin Tools (an AI program that helps you plan lessons, create resources, and explain difficult concepts in simpler language), ChatGPT is still the go-to for teachers. Seventy-three per cent of teachers are sticking to ChatGPT, according to a report published by the Multidisciplinary Digital Publishing Institute. That makes sense, since ChatGPT offers a free version only for teachers.

The Benefits of New Technology

Serena Garcea is a supply teacher who often fills in for teachers who are on leave. When Garcea filled in for a Grade 3 teacher at West St. Paul School, she wasn’t given lesson materials and had to create them on her own. Since most of her day was spent with students, Garcea had little time to prepare lessons between classes. She often had to prepare slideshows, worksheets, and tests, and grade assignments, outside of school hours.

According to the Winnipeg Teachers’ Association, schools must schedule a minimum of 30 minutes of preparation time per day for a full-time teacher.

“In terms of teaching, your job never stops,” says Garcea. “It stops at work when I’ve printed everything for the next day, but my mind’s constantly running. I keep a notebook beside my bed just in case I get an idea that I want to write down for the next day.”

Planning after-hours is what pushes teachers like Garcea towards AI content generation. AI can spit out a worksheet or lesson plan in a fraction of the time it would take a human to develop.

Garcea says she uses AI to come up with engaging activities that incorporate learning goals and to make concepts accessible to a Grade 3 classroom. This includes changing tough words into plain language and creating simple images to keep her students engaged.

Garcea says the school administration at West St. Paul has encouraged her and others to use AI to streamline lesson planning. Now, Garcea works at Margaret Park Elementary as a supply teacher where she fills in for a variety of grade K-5 classes. Garcea says teachers there are split on who does and doesn’t use AI.

Garcea says the demands of her job have negative impacts on her mental health. She is not alone: in a report by the Canadian Teachers’ Federation (CTF/FCE), over 55 per cent of teachers said they strongly agree their work has consequences for their mental health.

Garcea says AI helps relieve the burnout. She says it would take her almost an hour to produce what ChatGPT did in seconds.

To test AI’s efficiency, I asked ChatGPT to “Generate me a short lesson plan with an activity for a 50-minute timeslot on beginner French terms for a Grade 3 classroom.” It generated a full lesson plan and activity, including instructions on how to set it up, in about 21 seconds.

Despite the efficiency, the lesson did have its faults. It was poorly timed, implying it would take 10 minutes for students to turn to someone sitting beside them and say “Je m’appelle ____.” However, it included ideas for many engaging activities, suggested ways that students could show their learning by the end of the lesson, and even offered tiered learning for struggling or advanced students. ChatGPT then offered to generate flashcards, more activities, and even a full-week mini-unit.

Grading students’ work can be more efficient with AI. Teachers can upload screenshots of students’ completed worksheets, and AI can mark them and give each student a score. For teachers with big classes, it can take away the repetitive job of cross-referencing answer keys with student tests.

But Garcea says she’d never dream of using AI to grade her students’ work. She deems it unethical and thinks it’s work that teachers should be doing, not AI.

The Risks of AI

While AI’s potential as an efficient tool is high, there are also some pretty big downsides to using it.

A brain is like a muscle; if we don’t use it often enough, it gets weaker. In an article from the Harvard Gazette titled “Is AI dulling our minds?” the main concern is that our critical thinking skills will deteriorate from over-reliance on the technology.

According to a 2025 study by the MIT Media Lab, over-reliance on AI-driven solutions can contribute to cognitive atrophy, a symptom seen in neurodegenerative diseases like Alzheimer’s disease.

AI also has the ability to plagiarize other work. Scott Plantje, a Grade 10 teacher at the Seven Oaks MET School (SOMET) with 15 years of experience, says he’s previously used AI to generate names of fake Pokémon to correspond to different elements of the periodic table for his Grade 10 students. Unbeknownst to Plantje, the AI had plagiarized a real Pokémon name.

While Plantje isn’t on Pokémon’s radar, the issue illustrates the risks associated with AI-generated content and copyright.

Using AI also has environmental disadvantages. Large data centres, like the ones run by OpenAI and Google, can consume up to almost 1.9 million litres of water a day, according to a report by the Environmental and Energy Study Institute.

AI can also spread bias found online.

“The data sets on which algorithms are trained on often underrepresent certain groups of people,” writes Ashwini K.P. in a UN General Assembly report. “If particular groups are over- or underrepresented in the training sets, including racial and ethnic lines, algorithmic bias can result.”

Over 75 per cent of students have come across racist or sexist content online, BC’s Office of the Human Rights Commissioner reports. If AI were to consume and use this biased info, its results would reflect that. If the materials are pushed to students, they could be ingesting biased facts, altering how they think about certain scenarios and spreading misinformation.

Another big concern with AI is user privacy. Any sensitive data entered into AI platforms runs the risk of being retained and influencing future AI outputs, according to the National Cybersecurity Alliance’s article on AI and data privacy: “Think of AI like social media: if you wouldn’t post it, don’t enter it into AI.”

It’s not just that AI programs retain your data. A report by Koi, a digital security platform, shows that conversations with LLMs like ChatGPT, Claude, Gemini, and many others can be harvested not just by the AI itself, but also by certain Chrome extensions. These extensions could sell conversational data on the web.

If a teacher uploads a student’s information through an assignment, the AI can store that information and could potentially sell it to other third-party companies.

While there aren’t any policies to prevent sharing of personal data, Matt Henderson, superintendent of the Winnipeg School Division, told the Winnipeg Free Press that he advises against putting personal, confidential, or sensitive information into AI.

Weighing the Ethics of AI

David Zynoberg, a coordinator at the Seven Oaks MET School, attended the Artificial Intelligence in Education Summit in January 2026. During the summit, Zynoberg says the speaker talked about a phenomenon that happens among educators and philosophers when groundbreaking technology enters the education setting. The speaker used the example of the printing press. Zynoberg says there were quotes suggesting that written text would make people dumber, questioning why we would have to hold our thoughts when paper could do it for us.

Thirty-nine per cent of teachers abstain from using AI due to ethical concerns, according to the Actua report. With ethics among teachers’ top concerns around AI technology, how are organizations quelling teachers’ distress and implementing guidelines on AI?

Manitoba is still working on developing an AI framework for educators, so there are no default guidelines or laws to follow. However, many organizations have taken it upon themselves to create guidelines for their staff. The Canadian Teachers’ Federation has created a brief on AI governance in Canadian public education. The brief covers topics like privacy concerns of students and teachers, negative impacts on critical skills, and mental health concerns due to exposure to harmful generative AI content.

The CTF/FCE recommends that all AI usage for classroom materials be monitored and that teachers be transparent and disclose all AI usage. No personal data should be collected from students, nor should any student data be sold.

The United Nations Educational, Scientific and Cultural Organization (UNESCO) also released a publication, AI and education: guidance for policy-makers. The resource is meant to help administrators and even local governments create rules. The publication covers topics like AI benefit-risk assessment, reviews of policy responses, and recommendations for governments and administrations for governing AI use.

The University of Alberta created ethical considerations for using generative AI. The guide also includes some questions to ask yourself when prompting AI:

- Have I weighed my intended use of generative AI against the environmental/ecological impacts?

- What are the copyright implications of using others’ content in a generative AI tool?

- How will I verify the generated content to make sure it is credible?

- How will I address the biases that may shape the generated content?

These questions apply to anyone using AI. More locally, the Louis Riel School Division announced it has established a Data Governance Working Group to create AI guidelines and shape how data and technology are used across the division.

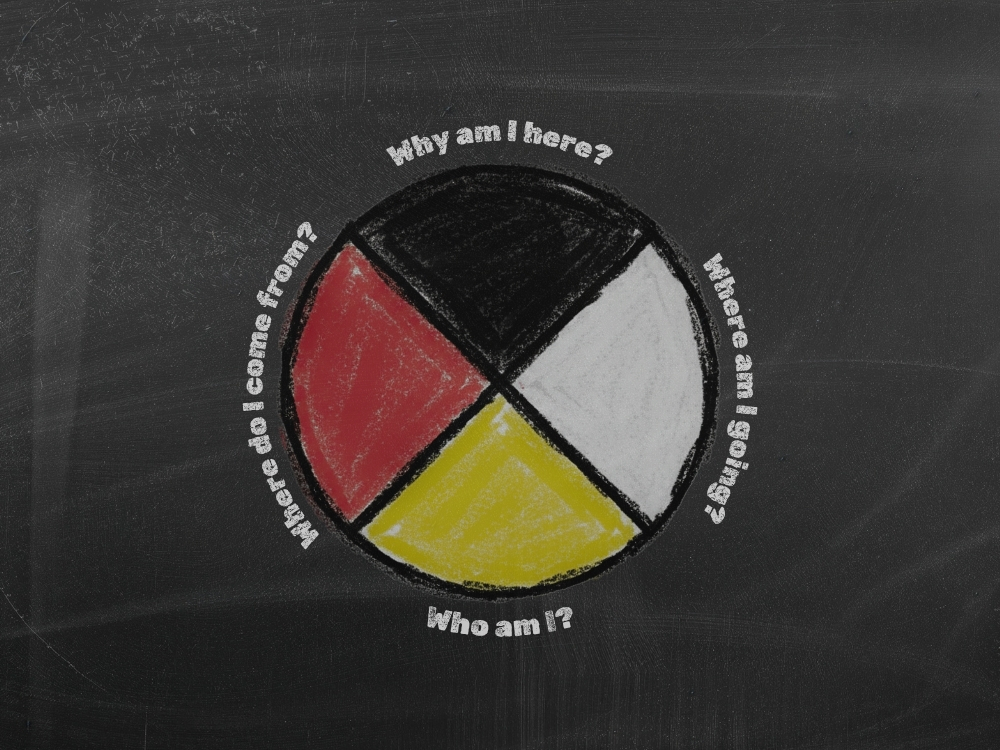

Manitoba’s largest school division, the Winnipeg School Division, has also set an AI Thinking Framework. The framework incorporates the Four Questions of the late Murray Sinclair, Manitoba’s first Indigenous judge and chair of the Truth and Reconciliation Commission of Canada: Where do I come from? Where am I going? Why am I here? Who am I?

The framework states that AI has difficulty answering these because of how personal and how human the questions are. Teachers are invited to ask these four questions to ground every AI use in identity, purpose, and community, ensuring that technology serves reconciliation and human flourishing.

The Winnipeg School Division also has its own questions to help us critically reflect on AI usage alongside Truth and Reconciliation:

- Honouring Story & Place: How can AI help us listen to and amplify Indigenous voices, while ensuring that knowledge remains community-owned and culturally protected?

- Learning from the Past for the Future: In what ways can AI-assisted archives, translations, or storytelling tools help staff and students connect past injustices to present responsibilities, and chart a more just path forward?

- Relational Accountability: How will we ensure that any AI use — whether in curriculum or operations — builds authentic relationships with Indigenous communities and does not replace the human work of reconciliation?

All these guidelines are key to helping teachers structure AI usage. Restrictions help teachers avoid the repercussions of AI use, like copyright infringement or sharing the personal and private data of themselves or others. The guiding questions help teachers weigh the ethics involved in a simple AI prompt.

Looking Ahead

With AI leading to concerns about privacy violations, environmental deterioration, and even symptoms seen in Alzheimer’s, many organizations are still figuring out a way to use it safely.

In Manitoba, the province’s Artificial Intelligence in Education Summit was a first step, and the forthcoming guidelines on AI use for educators will be the next. Tracy Schmidt, Minister of Education and Early Childhood Learning, said in an email that she “will be working with external partners to develop Guiding Principles on AI in Education to support educators in integrating AI in a way that’s strengthen student learning through high-quality teaching and learning experience.” There is no release date yet for the Guiding Principles on AI in Education.

While it still may be some time before there are any official government policies on AI in education, schools and teachers already have structured guidelines and have adapted to using AI.

We are watching a technological revolution unfold within the educational realm. Like the printing press, it’s only a matter of time before AI is adopted fully into the classroom, whether teachers like it or not. The real concern is whether the education system will adapt alongside it, or whether we’ll have to continue to rely on teachers to absorb the consequences of that change on their own.

For now, teachers like Garcea and Plantje — and their students — will continue to be on the front lines navigating the real interplay between AI and the relationships that are at the heart of education.